How To Write Fast, Memory-Efficient JavaScript

JavaScript engines such as Google’s V8 (Chrome, Node) are specifically designed for the fast execution of large JavaScript applications. As you develop, if you care about memory usage and performance, you should be aware of some of what’s going on behind the scenes in your users’ JavaScript engines.

Whether it’s V8, SpiderMonkey (Firefox), Carakan (Opera), Chakra (IE) or something else, doing so can help you better optimize your applications. That’s not to say one should optimize for a single browser or engine. Never do that.

You should, however, ask yourself questions such as:

- Is there anything I could be doing more efficiently in my code?

- What (common) optimizations do popular JavaScript engines make?

- What is the engine unable to optimize for, and is the garbage collector able to clean up what I’m expecting it to?

There are many common pitfalls when it comes to writing memory-efficient and fast code, and in this article we’re going to explore some test-proven approaches for writing code that performs better.

So, How Does JavaScript Work In V8?

While it’s possible to develop large-scale applications without a thorough understanding of JavaScript engines, any car owner will tell you they’ve looked under the hood at least once. As Chrome is my browser of choice, I’m going to talk a little about its JavaScript engine. V8 is made up of a few core pieces.

- A base compiler, which parses your JavaScript and generates native machine code before it is executed, rather than executing bytecode or simply interpreting it. This code is initially not highly optimized.

- V8 represents your objects in an object model. Objects are represented as associative arrays in JavaScript, but in V8 they are represented with hidden classes, which are an internal type system for optimized lookups.

- The runtime profiler monitors the system being run and identifies “hot” functions (i.e. code that ends up spending a long time running).

- An optimizing compiler recompiles and optimizes the “hot” code identified by the runtime profiler, and performs optimizations such as inlining (i.e. replacing a function call site with the body of the callee).

- V8 supports deoptimization, meaning the optimizing compiler can bail out of code generated if it discovers that some of the assumptions it made about the optimized code were too optimistic.

- It has a garbage collector. Understanding how it works can be just as important as the optimized JavaScript.

Garbage Collection

Garbage collection is a form of memory management. It’s where we have the notion of a collector which attempts to reclaim memory occupied by objects that are no longer being used. In a garbage-collected language such as JavaScript, objects that are still referenced by your application are not cleaned up.

Manually de-referencing objects is not necessary in most cases. By simply putting the variables where they need to be (ideally, as local as possible, i.e. inside the function where they are used versus an outer scope), things should just work.

It’s not possible to force garbage collection in JavaScript. You wouldn’t want to do this, because the garbage collection process is controlled by the runtime, and it generally knows best when things should be cleaned up.

De-Referencing Misconceptions

In quite a few discussions online about reclaiming memory in JavaScript, the delete keyword comes up. Although it is only meant for removing keys from an object, some developers think they can force de-referencing with it. Avoid using delete if you can. In the example below, delete o.x does a lot more harm than good behind the scenes, as it changes o’s hidden class and makes it a generic slow object.

var o = { x: 1 };
delete o.x; // true
o.x; // undefined

That said, you are almost certain to find references to delete in many popular JavaScript libraries; it does have a purpose in the language. The main takeaway here is to avoid modifying the structure of hot objects at runtime. JavaScript engines can detect such “hot” objects and attempt to optimize them. This is easier if the object’s structure doesn’t heavily change over its lifetime, and delete can trigger such changes.

There are also misconceptions about how null works. Setting an object reference to null doesn’t “null” the object. It sets the object reference to null. Using o.x = null is better than using delete, but it’s probably not even necessary.

var o = { x: 1 };
o = null;
o; // null
o.x; // TypeError

If this reference was the last reference to the object, the object is then eligible for garbage collection. If the reference was not the last reference to the object, the object is reachable and will not be garbage collected.

Another important note to be aware of is that global variables are not cleaned up by the garbage collector during the life of your page. Regardless of how long the page is open, variables scoped to the JavaScript runtime global object will stick around.

var myGlobalNamespace = {};

Globals are cleaned up when you refresh the page, navigate to a different page, close tabs or exit your browser. Function-scoped variables get cleaned up once they fall out of scope: when a function has exited and nothing else holds a reference to the values of its local variables, those values become eligible for collection.

Rules Of Thumb

To give the garbage collector a chance to collect as many objects as possible as early as possible, don’t hold on to objects you no longer need. This mostly happens automatically; here are a few things to keep in mind.

- As mentioned earlier, a better alternative to manual de-referencing is to use variables with an appropriate scope. I.e. instead of a global variable that’s nulled out, just use a function-local variable that goes out of scope when it’s no longer needed. This means cleaner code with less to worry about.

- Ensure that you’re unbinding event listeners where they are no longer required, especially when the DOM objects they’re bound to are about to be removed.

- If you’re using a data cache locally, make sure to clean that cache or use an aging mechanism to avoid large chunks of data being stored that you’re unlikely to reuse.
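As an illustration of the last point, here is a minimal sketch of a cache with an aging mechanism. The AgingCache class, its maxEntries and maxAgeMs limits and the injectable clock are hypothetical choices for this example, not an API from the article: entries expire after a fixed age, and the oldest entries are evicted once the cache grows past a size limit, so the cache never holds on to data indefinitely.

```javascript
// Hypothetical size- and age-bounded cache (a sketch, not a library API).
class AgingCache {
  constructor(maxEntries, maxAgeMs, now = Date.now) {
    this.maxEntries = maxEntries;
    this.maxAgeMs = maxAgeMs;
    this.now = now;            // injectable clock, handy for testing
    this.entries = new Map();  // key -> { value, storedAt }
  }

  set(key, value) {
    // Re-inserting moves the key to the end of the Map's insertion order.
    this.entries.delete(key);
    this.entries.set(key, { value, storedAt: this.now() });
    // Evict the oldest entries once we exceed the size limit.
    while (this.entries.size > this.maxEntries) {
      const oldestKey = this.entries.keys().next().value;
      this.entries.delete(oldestKey);
    }
  }

  get(key) {
    const entry = this.entries.get(key);
    if (entry === undefined) return undefined;
    if (this.now() - entry.storedAt > this.maxAgeMs) {
      // Expired: drop our reference so the value can be collected.
      this.entries.delete(key);
      return undefined;
    }
    return entry.value;
  }
}
```

Map.prototype.delete() is an ordinary method for removing a Map entry; it is not the delete operator discussed earlier.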

Functions

Next, let’s look at functions. As we’ve already said, garbage collection works by reclaiming blocks of memory (objects) which are no longer reachable. To better illustrate this, here are some examples.

function foo() {
  var bar = new LargeObject();
  bar.someCall();
}

When foo returns, the object which bar points to is automatically available for garbage collection, because there is nothing left that has a reference to it.

Compare this to:

function foo() {
  var bar = new LargeObject();
  bar.someCall();
  return bar;
}

// somewhere else
var b = foo();

We now have a reference to the object which survives the call and persists until the caller assigns something else to b (or b goes out of scope).

Closures

When you see a function that returns an inner function, that inner function keeps access to the outer scope even after the outer function has finished executing. This is essentially a closure: a function bundled with the variables of the context in which it was created. For example:

function sum(x) {
  function sumIt(y) {
    return x + y;
  }
  return sumIt;
}

// Usage
var sumA = sum(4);
var sumB = sumA(3);
console.log(sumB); // Logs 7

The function object created within the execution context of the call to sum can’t be garbage collected, as it’s referenced by a global variable and is still very much accessible. It can still be executed via sumA(n).

Let’s look at another example. Here, can we access largeStr?

var a = function () {
  var largeStr = new Array(1000000).join('x');
  return function () {
    return largeStr;
  };
}();

Yes, we can, via a(), so it’s not collected. How about this one?

var a = function () {
  var smallStr = 'x';
  var largeStr = new Array(1000000).join('x');
  return function (n) {
    return smallStr;
  };
}();

We can’t access largeStr anymore, so it’s a candidate for garbage collection.

Timers

One of the worst places to leak is in a loop, or in setTimeout()/setInterval(), but this is quite common.

Consider the following example.

var myObj = {
  callMeMaybe: function () {
    var myRef = this;
    var val = setTimeout(function () {
      console.log('Time is running out!');
      myRef.callMeMaybe();
    }, 1000);
  }
};

If we then run:

myObj.callMeMaybe();

to begin the timer, we see “Time is running out!” logged every second. If we then run:

myObj = null;

the timer will still fire. myObj won’t be garbage collected, as the closure passed to setTimeout has to be kept alive in order to be executed. In turn, it holds references to myObj as it captures myRef. This would be the same if we’d passed the closure to any other function, keeping references to it.

It is also worth keeping in mind that references inside a setTimeout/setInterval call, such as functions, will need to execute and complete before they can be garbage collected.
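One way to let such an object go is to keep the handle that setTimeout() returns and cancel it with clearTimeout() before dropping the last reference. The sketch below reworks the callMeMaybe example along those lines; createTicker, start and stop are hypothetical names.

```javascript
// Hypothetical rework of callMeMaybe with an explicit way to stop it.
function createTicker(intervalMs) {
  return {
    timerId: null,
    ticks: 0,
    start: function () {
      const self = this;
      // This closure keeps `self` (and everything it references) alive
      // for as long as the timeout is pending.
      this.timerId = setTimeout(function () {
        self.ticks++;
        console.log('Time is running out!');
        self.start(); // re-arm, as callMeMaybe() does
      }, intervalMs);
    },
    stop: function () {
      // Cancelling the pending timeout releases the closure, so the
      // ticker becomes collectable once our own references go away.
      clearTimeout(this.timerId);
      this.timerId = null;
    }
  };
}
```

Calling ticker.stop() before setting the variable to null breaks the timer's reference chain, which the original example never does.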

Be Aware Of Performance Traps

It’s important never to optimize code until you actually need to. This can’t be stressed enough. It’s easy to see a number of micro-benchmarks showing that N is more optimal than M in V8, but test it in a real module of code or in an actual application, and the true impact of those optimizations may be much smaller than you were expecting.

Let’s say we want to create a module which:

- Takes a local source of data containing items with a numeric ID,

- Draws a table containing this data,

- Adds event handlers for toggling a class when a user clicks on any cell.

There are a few different factors to this problem, even though it’s quite straightforward to solve. How do we store the data? How do we efficiently draw the table and append it to the DOM? How do we handle events on this table optimally?

A first (naive) take on this problem might be to store each piece of available data in an object which we group into an array. One might use jQuery to iterate through the data and draw the table, then append it to the DOM. Finally, one might use event binding for adding the click behavior we desire.

Note: This is NOT what you should be doing

var moduleA = function () {
  // rows, $tbody and dataArrayObject are assumed to be defined elsewhere
  return {
    data: dataArrayObject,
    init: function () {
      this.addTable();
      this.addEvents();
    },
    addTable: function () {
      for (var i = 0; i < rows; i++) {
        var $tr = $('<tr></tr>');
        for (var j = 0; j < this.data.length; j++) {
          $tr.append('<td>' + this.data[j]['id'] + '</td>');
        }
        $tr.appendTo($tbody);
      }
    },
    addEvents: function () {
      // One handler bound to every td
      $('table td').on('click', function () {
        $(this).toggleClass('active');
      });
    }
  };
}();

Simple, but it gets the job done.

In this case, however, the only data we’re iterating over are IDs, a numeric property which could be more simply represented in a standard array. Interestingly, using DocumentFragment and native DOM methods directly is faster than using jQuery (in this manner) for our table generation, and of course, event delegation is typically more performant than binding each td individually.

Note that jQuery does use DocumentFragment internally behind the scenes, but in our example the code calls append() within a loop, and each of these calls has little knowledge of the others, so jQuery may not be able to optimize for this case. This should hopefully not be a pain point, but benchmark your own code to be sure.

In our case, adding in these changes results in some good (expected) performance gains. Event delegation provides a decent improvement over simply binding, and opting for DocumentFragment was a real boost.

var moduleD = function () {
  // rows, tbody and dataArray are assumed to be defined elsewhere
  return {
    data: dataArray,
    init: function () {
      this.addTable();
      this.addEvents();
    },
    addTable: function () {
      var td, tr;
      var frag = document.createDocumentFragment();
      var frag2 = document.createDocumentFragment();
      for (var i = 0; i < rows; i++) {
        tr = document.createElement('tr');
        for (var j = 0; j < this.data.length; j++) {
          td = document.createElement('td');
          td.appendChild(document.createTextNode(this.data[j]));
          frag2.appendChild(td);
        }
        // Appending a fragment moves its children and leaves it empty,
        // so frag2 can be reused for the next row.
        tr.appendChild(frag2);
        frag.appendChild(tr);
      }
      // A single insertion into the live DOM
      tbody.appendChild(frag);
    },
    addEvents: function () {
      // One delegated handler on the table instead of one per td
      $('table').on('click', 'td', function () {
        $(this).toggleClass('active');
      });
    }
  };
}();

We might then look to other ways of improving performance. You may have read somewhere that using the prototypal pattern is faster than the module pattern (we confirmed it wasn’t earlier), or heard that JavaScript templating frameworks are highly optimized. Sometimes they are, but use them because they make for readable code. Also, precompile! Let’s test and find out how true this holds in practice.

var moduleG = function () {};

moduleG.prototype.data = dataArray;

moduleG.prototype.init = function () {
  this.addTable();
  this.addEvents();
};

moduleG.prototype.addTable = function () {
  var template = _.template($('#template').text());
  var html = template({'data' : this.data});
  $tbody.append(html);
};

moduleG.prototype.addEvents = function () {
  $('table').on('click', 'td', function () {
    $(this).toggleClass('active');
  });
};

var modG = new moduleG();

As it turns out, in this case the performance benefits are negligible. Opting for templating and prototypes didn’t really offer anything more than what we had before. That said, performance isn’t really the reason modern developers use either of these things; it’s the readability, inheritance model and maintainability they bring to your codebase.

More complex problems include efficiently drawing images using canvas and manipulating pixel data with or without typed arrays.

Always give micro-benchmarks a close look before exploring their use in your application. Some of you may recall the JavaScript templating shoot-off and the extended shoot-off that followed. You want to make sure that tests aren’t being impacted by constraints you’re unlikely to see in real-world applications: test optimizations together in actual code.

V8 Optimization Tips

Whilst detailing every V8 optimization is outside the scope of this article, there are certainly many tips worth noting. Keep these in mind and you’ll reduce your chances of writing unperformant code.

- Certain patterns will cause V8 to bail out of optimizations. A try-catch, for example, will cause such a bailout. For more information on what functions can and can’t be optimized, you can use d8 --trace-opt file.js with the d8 shell utility that comes with V8.

- If you care about speed, try very hard to keep your functions monomorphic, i.e. make sure that variables (including properties, arrays and function parameters) only ever contain objects with the same hidden class. For example, don’t do this:

function add(x, y) {
  return x + y;
}

add(1, 2);
add('a', 'b');
add(my_custom_object, undefined);

- Don’t load from uninitialized or deleted elements. This won’t make a difference in output, but it will make things slower.

- Don’t write enormous functions, as they are more difficult to optimize
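A minimal sketch of the monomorphic alternative to the add() example above: rather than feeding one function numbers, strings and objects, give each type its own function so every call site sees a single kind of argument. The function names are illustrative, and whether V8 actually optimizes a given function still depends on the engine version.

```javascript
// Each function only ever sees one type of argument.
function addNumbers(x, y) {
  return x + y; // always two numbers
}

function concatStrings(a, b) {
  return a + b; // always two strings
}

// A hot loop that only ever passes numbers to addNumbers().
function sumAll(numbers) {
  let total = 0;
  for (let i = 0; i < numbers.length; i++) {
    total = addNumbers(total, numbers[i]);
  }
  return total;
}
```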

For more tips, watch Daniel Clifford’s Google I/O talk Breaking the JavaScript Speed Limit with V8 as it covers these topics well. Optimizing For V8 — A Series is also worth a read.

Objects Vs. Arrays: Which Should I Use?

- If you want to store a bunch of numbers, or a list of objects of the same type, use an array.

- If what you semantically need is an object with a bunch of properties (of varying types), use an object with properties. That’s pretty efficient in terms of memory, and it’s also pretty fast.

- Integer-indexed elements, regardless of whether they’re stored in an array or an object, are much faster to iterate over than object properties.

- Properties on objects are quite complex: they can be created with setters, and with differing enumerability and writability. Items in arrays can’t be customized as heavily; they either exist or they don’t. At an engine level, this allows for more optimization in terms of organizing the memory representing the structure. This is particularly beneficial when the array contains numbers. For example, when you need vectors, don’t define a class with properties x, y, z; use an array instead.
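To make the vector advice concrete, here is a small sketch that stores 3D vectors as plain [x, y, z] arrays of numbers; vecAdd and vecDot are hypothetical helper names.

```javascript
// A 3D vector represented as [x, y, z] rather than { x, y, z }.
function vecAdd(a, b) {
  return [a[0] + b[0], a[1] + b[1], a[2] + b[2]];
}

function vecDot(a, b) {
  return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}
```

Typed arrays such as Float64Array are another option when you need fixed-length numeric storage.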

There’s really only one major difference between objects and arrays in JavaScript, and that’s the arrays’ magic length property. If you’re keeping track of this property yourself, objects in V8 should be just as fast as arrays.

Tips When Using Objects

- Create objects using a constructor function. This ensures that all objects created with it have the same hidden class and helps avoid changing these classes.

- There are no restrictions on the number of different object types you can use in your application or on their complexity (within reason: long prototype chains tend to hurt, and objects with only a handful of properties get a special representation that’s a bit faster than bigger objects). For “hot” objects, try to keep the prototype chains short and the field count low.
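The constructor advice can be sketched as follows: Point always assigns x and then y, so its instances follow the same hidden-class transitions, while the ad hoc objects q1 and q2 receive the same properties in different orders. The names are illustrative; hidden classes aren’t observable from ordinary JavaScript, so the sketch shows the pattern rather than measuring it.

```javascript
// Every Point gets x, then y, in the same order.
function Point(x, y) {
  this.x = x;
  this.y = y;
}

const p1 = new Point(1, 2);
const p2 = new Point(3, 4);

// Ad hoc objects built with properties in different orders
// tend to end up with different hidden classes.
const q1 = {};
q1.x = 1;
q1.y = 2;

const q2 = {};
q2.y = 4; // y first...
q2.x = 3; // ...then x: a different transition path than q1
```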

Object Cloning

Object cloning is a common problem for app developers. While it’s possible to benchmark how well various implementations work with this type of problem in V8, be very careful when copying anything. Copying big things is generally slow — don’t do it. for..in loops in JavaScript are particularly bad for this, as they have a devilish specification and will likely never be fast in any engine for arbitrary objects.

When you absolutely do need to copy objects in a performance-critical code path (and you can’t get out of this situation), use an array or a custom “copy constructor” function which copies each property explicitly. This is probably the fastest way to do it:

function clone(original) {
  this.foo = original.foo;
  this.bar = original.bar;
}

var copy = new clone(original);

Cached Functions in the Module Pattern

Caching your functions when using the module pattern can lead to performance improvements. See below for an example where the variation you’re probably used to seeing is slower, as it forces new copies of the member functions to be created all the time.

Here is a test of prototype versus module pattern performance:

// Stand-in for the logging function used by the original test
function log(msg) {}

// Prototypal pattern
var Klass1 = function () {};

Klass1.prototype.foo = function () {
  log('foo');
};

Klass1.prototype.bar = function () {
  log('bar');
};

// Module pattern: new function objects on every call
var Klass2 = function () {
  var foo = function () {
      log('foo');
    },
    bar = function () {
      log('bar');
    };

  return {
    foo: foo,
    bar: bar
  };
};

// Module pattern with cached functions: shared function objects
var FooFunction = function () {
  log('foo');
};

var BarFunction = function () {
  log('bar');
};

var Klass3 = function () {
  return {
    foo: FooFunction,
    bar: BarFunction
  };
};

// Iteration tests

// Prototypal
var i = 1000,
  objs = [],
  o;
while (i--) {
  o = new Klass1();
  objs.push(o);
  o.bar();
  o.foo();
}

// Module pattern
i = 1000;
objs = [];
while (i--) {
  o = Klass2();
  objs.push(o);
  o.bar();
  o.foo();
}

// Module pattern with cached functions
i = 1000;
objs = [];
while (i--) {
  o = Klass3();
  objs.push(o);
  o.bar();
  o.foo();
}

// See the test for full details

Note: If you don’t require a class, avoid the trouble of creating one. Here’s an example of how to gain performance boosts by removing the class overhead altogether.

Tips When Using Arrays

Next let’s look at a few tips for arrays. In general, don’t delete array elements. It would make the array transition to a slower internal representation. When the key set becomes sparse, V8 will eventually switch elements to dictionary mode, which is even slower.

Array Literals

Array literals are useful because they give a hint to the VM about the size and type of the array. They’re typically good for small to medium sized arrays.

// Here V8 can see that you want a 4-element array containing numbers:
var a = [1, 2, 3, 4];

// Don't do this:
a = []; // Here V8 knows nothing about the array
for (var i = 1; i <= 4; i++) {
  a.push(i);
}

Storage of Single Types Vs. Mixed Types

It’s never a good idea to mix values of different types (e.g. numbers, strings, undefined or true/false) in the same array (e.g. var arr = [1, '1', undefined, true, 'true']).

Test of type inference performance

As we can see from the results, the array of ints is the fastest.
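If your input genuinely arrives with mixed types, one option is to normalize it before storing it. A minimal sketch, assuming you only want finite numbers and are happy to drop everything else; toNumberArray is a hypothetical helper.

```javascript
// Convert mixed input into an array that holds only numbers.
function toNumberArray(values) {
  const result = [];
  for (let i = 0; i < values.length; i++) {
    // Number('2') -> 2, Number(undefined) -> NaN; note that booleans
    // convert to 1 and 0 and would be kept.
    const n = Number(values[i]);
    if (Number.isFinite(n)) {
      result.push(n);
    }
  }
  return result;
}
```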

Sparse Arrays vs. Full Arrays

When you use sparse arrays, be aware that accessing elements in them is much slower than in full arrays. That’s because V8 doesn’t allocate a flat backing store for the elements if only a few of them are used. Instead, it manages them in a dictionary, which saves space, but costs time on access.

Test of sparse arrays versus full arrays.

The full array sum and sum of all elements on an array without zeros were actually the fastest. Whether the full array contains zeroes or not should not make a difference.

Packed Vs. Holey Arrays

Avoid “holes” in an array (created by deleting elements or a[x] = foo with x > a.length). Even if only a single element is deleted from an otherwise “full” array, things will be much slower.

Test of packed versus holey arrays.

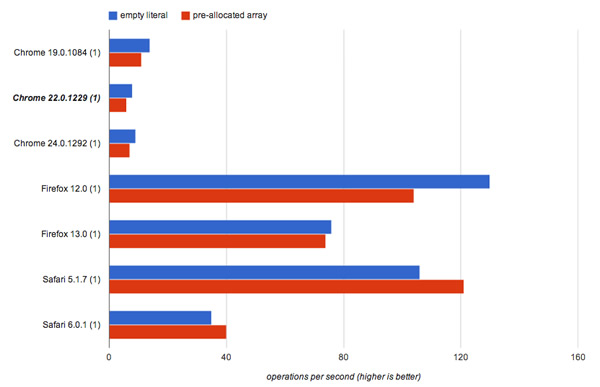
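The following sketch shows the two ways of creating holes mentioned above, next to hole-free alternatives (splice() to remove an element, push() to append). The variable names are illustrative. In V8, an array that has become holey is generally not converted back to a packed representation, so it pays to avoid the hole in the first place.

```javascript
// Two ways to create holes:
const holeyByDelete = [1, 2, 3, 4];
delete holeyByDelete[1];   // hole at index 1; length stays 4

const holeyByGap = [1, 2, 3];
holeyByGap[5] = 6;         // indices 3 and 4 become holes

// Hole-free alternatives:
const packed = [1, 2, 3, 4];
packed.splice(1, 1);       // removes the element and shifts the rest: [1, 3, 4]

const appended = [1, 2, 3];
appended.push(6);          // writes at index length, so no gap
```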

Pre-allocating Arrays Vs. Growing As You Go

Don’t pre-allocate large arrays (i.e. greater than 64K elements) to their maximum size; instead, grow as you go. Before we get to the performance tests for this tip, keep in mind that this is specific to only some JavaScript engines.

Nitro (Safari) actually treats pre-allocated arrays more favorably. However, in other engines (V8, SpiderMonkey), not pre-allocating is more efficient.

// Empty array
var arr = [];
for (var i = 0; i < 1000000; i++) {
  arr[i] = i;
}

// Pre-allocated array
var arr = new Array(1000000);
for (var i = 0; i < 1000000; i++) {
  arr[i] = i;
}

Optimizing Your Application

In the world of Web applications, speed is everything. No user wants a spreadsheet application to take seconds to sum up an entire column or a summary of their messages to take a minute before it’s ready. This is why squeezing every drop of extra performance you can out of code can sometimes be critical.

While understanding and improving your application performance is useful, it can also be difficult. We recommend the following steps to fix performance pain points:

- Measure it: Find the slow spots in your application (~45%)

- Understand it: Find out what the actual problem is (~45%)

- Fix it! (~10%)

Some of the tools and techniques recommended below can assist with this process.

Benchmarking

There are many ways to run benchmarks on JavaScript snippets to test their performance — the general assumption being that benchmarking is simply comparing two timestamps. One such pattern was pointed out by the jsPerf team, and happens to be used in SunSpider’s and Kraken’s benchmark suites:

var totalTime,
  start = new Date,
  iterations = 1000;
while (iterations--) {
  // Code snippet goes here
}
// totalTime → the number of milliseconds taken
// to execute the code snippet 1000 times
totalTime = new Date - start;

Here, the code to be tested is placed within a loop and run a set number of times (here, 1000). After this, the start date is subtracted from the end date to find the time taken to perform the operations in the loop.

However, this oversimplifies how benchmarking should be done, especially if you want to run the benchmarks in multiple browsers and environments. Garbage collection itself can have an impact on your results. Even if you’re using a solution like window.performance, you still have to account for these pitfalls.

Regardless of whether you are simply running benchmarks against parts of your code, writing a test suite or coding a benchmarking library, there’s a lot more to JavaScript benchmarking than you might think. For a more detailed guide to benchmarking, I highly recommend reading JavaScript Benchmarking by Mathias Bynens and John-David Dalton.
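As a rough illustration of accounting for some of these pitfalls, here is a sketch of a measurement helper that warms the code up first, takes several timed samples using performance.now() and reports the median time per call. The measure() function and its defaults are hypothetical, and it is still no substitute for a dedicated benchmarking library.

```javascript
// Hypothetical helper: median time per call of fn, in milliseconds.
// performance.now() is a global in browsers and in recent Node.js.
function measure(fn, iterations = 1000, samples = 20, warmup = 100) {
  // Warm-up runs give the engine a chance to optimize fn before timing.
  for (let w = 0; w < warmup; w++) {
    fn();
  }

  const times = [];
  for (let s = 0; s < samples; s++) {
    const start = performance.now();
    for (let i = 0; i < iterations; i++) {
      fn();
    }
    times.push((performance.now() - start) / iterations);
  }

  // The median is less sensitive than the mean to an outlier sample,
  // such as one that happened to include a garbage-collection pause.
  times.sort((a, b) => a - b);
  const mid = Math.floor(times.length / 2);
  return times.length % 2 === 1
    ? times[mid]
    : (times[mid - 1] + times[mid]) / 2;
}
```

For example, measure(() => JSON.parse('{"a":1}')) returns an approximate per-call cost; compare numbers only within the same browser and environment.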

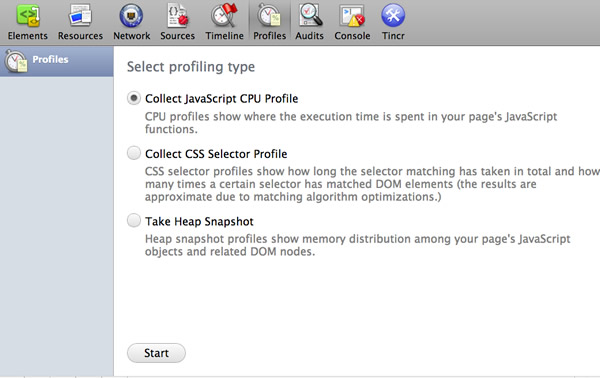

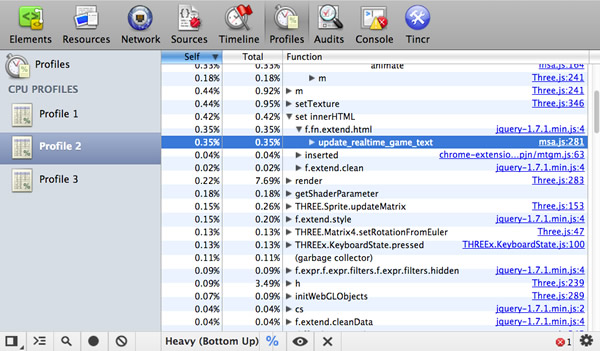

Profiling

The Chrome Developer Tools have good support for JavaScript profiling. You can use this feature to detect which functions are eating up most of your time so that you can then optimize them. This is important, as even small changes to your codebase can have serious impacts on your overall performance.

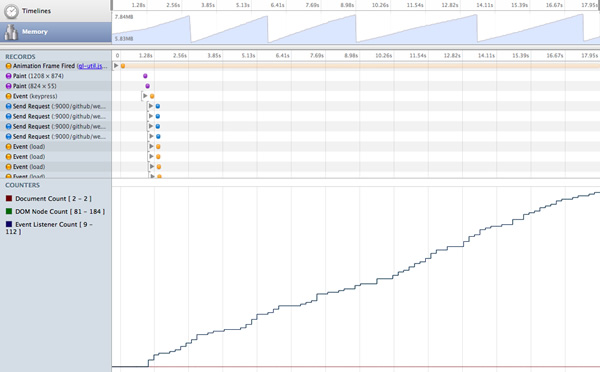

Profiling starts with obtaining a baseline for your code’s current performance, which can be discovered using the Timeline. This will tell us how long our code took to run. The Profiles tab then gives us a better view into what’s happening in our application. The JavaScript CPU profile shows us how much CPU time is being used by our code, the CSS selector profile shows us how much time is spent processing selectors and Heap snapshots show how much memory is being used by our objects.

Using these tools, we can isolate, tweak and reprofile to gauge whether changes we’re making to specific functions or operations are improving performance.

For a good introduction to profiling, read JavaScript Profiling With The Chrome Developer Tools, by Zack Grossbart.

Tip: Ideally, you want to ensure that your profiling isn’t being affected by extensions or applications you’ve installed, so run Chrome using the --user-data-dir=<empty_directory> flag. Most of the time, this approach to optimization testing should be enough, but there are times when you need more. This is where V8 flags can be of help.

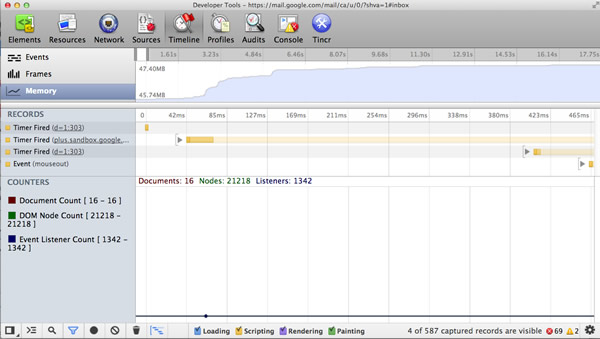

Avoiding Memory Leaks — Three Snapshot Techniques for Discovery

Internally at Google, the Chrome Developer Tools are heavily used by teams such as Gmail to help us discover and squash memory leaks.

Some of the memory statistics that our teams care about include private memory usage, JavaScript heap size, DOM node counts, storage clearing, event listener counts and what’s going on with garbage collection. For those familiar with event-driven architectures, you might be interested to know that one of the most common issues we used to have was listen() calls without matching unlisten() calls (Closure) and missing dispose() calls for objects that create event listeners.
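The fix for that class of leak is to pair every listen with an unlisten and to give objects that register listeners a dispose() method. Here is a minimal sketch using the standard EventTarget interface (available in browsers and as a global in recent Node.js); the Widget constructor and its dispose() method are hypothetical, not Closure Library APIs.

```javascript
// Hypothetical component that registers a listener and can undo it.
function Widget(target) {
  this.clicks = 0;
  this.target = target;
  // Keep the exact function we register: removeEventListener()
  // only works when given the same function object.
  this.onClick = this.handleClick.bind(this);
  target.addEventListener('click', this.onClick);
}

Widget.prototype.handleClick = function () {
  this.clicks++;
};

Widget.prototype.dispose = function () {
  // Without this, the target keeps the listener, and through it the
  // widget, reachable for as long as the target itself lives.
  this.target.removeEventListener('click', this.onClick);
  this.target = null;
};
```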

Luckily the DevTools can help locate some of these issues, and Loreena Lee has a fantastic presentation available documenting the “3 snapshot” technique for finding leaks within the DevTools that I can’t recommend reading through enough.

The gist of the technique is that you record a number of actions in your application, force a garbage collection, check whether the number of DOM nodes returns to your expected baseline and then analyze three heap snapshots to determine if you have a leak.

Memory Management in Single-Page Applications

Memory management is quite important when writing modern single-page applications (e.g. AngularJS, Backbone, Ember) as they almost never get refreshed. This means that memory leaks can become apparent quite quickly. This is a huge trap on mobile single-page applications, because of limited memory, and on long-running applications like email clients or social networking applications. With great power comes great responsibility.

There are various ways to prevent this. In Backbone, ensure you always dispose of old views and references using dispose() (currently available in Backbone (edge)). This function was recently added, and removes any handlers added in the view’s ‘events’ object, as well as any collection or model listeners where the view is passed as the third argument (callback context). dispose() is also called by the view’s remove(), taking care of the majority of basic memory cleanup needs when the element is cleared from the screen. Other libraries like Ember clean up observers when they detect that elements have been removed from view to avoid memory leaks.

Some sage advice from Derick Bailey:

“Other than being aware of how events work in terms of references, just follow the standard rules for manage memory in JavaScript and you’ll be fine. If you are loading data in to a Backbone collection full of User objects you want that collection to be cleaned up so it’s not using anymore memory, you must remove all references to the collection and the individual objects in it. Once you remove all references, things will be cleaned up. This is just the standard JavaScript garbage collection rule.”

In his article, Derick covers many of the common memory pitfalls when working with Backbone.js and how to fix them.

There is also a helpful tutorial available for debugging memory leaks in Node by Felix Geisendörfer worth reading, especially if it forms a part of your broader SPA stack.

Minimizing Reflows

When a browser has to recalculate the positions and geometry of elements in a document for the purpose of re-rendering it, we call this reflow. Reflow is a user-blocking operation in the browser, so it’s helpful to understand how to improve reflow time.

You should batch methods that trigger reflow or that repaint, and use them sparingly. It’s important to process off DOM where possible. This is possible using DocumentFragment, a lightweight document object. Think of it as a way to extract a portion of a document’s tree, or create a new “fragment” of a document. Rather than constantly adding to the DOM using nodes, we can use document fragments to build up all we need and only perform a single insert into the DOM to avoid excessive reflow.

For example, let’s write a function that adds 20 divs to an element. Simply appending each new div directly to the element could trigger 20 reflows.

function addDivs(element) {
  var div;
  for (var i = 0; i < 20; i++) {
    div = document.createElement('div');
    div.innerHTML = 'Heya!';
    element.appendChild(div);
  }
}

To work around this issue, we can use a DocumentFragment and append each of our new divs to it instead. When the fragment is then appended to the element with a method like appendChild, all of the fragment’s children are moved into the element at once, triggering only one reflow.

function addDivs(element) {
  var div;
  // Creates a new empty DocumentFragment.
  var fragment = document.createDocumentFragment();
  for (var i = 0; i < 20; i++) {
    div = document.createElement('div');
    div.innerHTML = 'Heya!';
    fragment.appendChild(div);
  }
  // A single insertion into the live DOM
  element.appendChild(fragment);
}

You can read more about this topic at Make the Web Faster, JavaScript Memory Optimization and Finding Memory Leaks.

JavaScript Memory Leak Detector

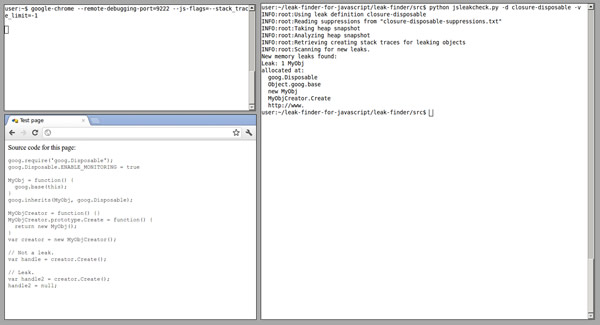

To help discover JavaScript memory leaks, two of my fellow Googlers (Marja Hölttä and Jochen Eisinger) developed a tool that works with the Chrome Developer Tools (specifically, the remote inspection protocol), and retrieves heap snapshots and detects what objects are causing leaks.

There’s a whole post on how to use the tool, and I encourage you to check it out or view the Leak Finder project page.

Some more information: In case you’re wondering why a tool like this isn’t already integrated with our Developer Tools, the reason is twofold. It was originally developed to help us catch some specific memory scenarios in the Closure Library, and it makes more sense as an external tool (or maybe even an extension if we get a heap profiling extension API in place).

V8 Flags for Debugging Optimizations & Garbage Collection

Chrome supports passing a number of flags directly to V8 via the js-flags flag to get more detailed output about what the engine is optimizing. For example, this traces V8 optimizations on Mac OS X:

"/Applications/Google Chrome.app/Contents/MacOS/Google Chrome" --js-flags="--trace-opt --trace-deopt"

Windows users will want to run:

chrome.exe --js-flags="--trace-opt --trace-deopt"

When developing your application, the following V8 flags can be used.

- --trace-opt: log names of optimized functions and show where the optimizer is skipping code because it can’t figure something out.

- --trace-deopt: log a list of code it had to deoptimize while running.

- --trace-gc: log a tracing line on each garbage collection.

V8’s tick-processing scripts mark optimized functions with an * (asterisk) and non-optimized functions with ~ (tilde).

If you’re interested in learning more about V8’s flags and how V8’s internals work in general, I strongly recommend looking through Vyacheslav Egorov’s excellent post on V8 internals, which summarizes the best resources available on this at the moment.

High-Resolution Time and Navigation Timing API

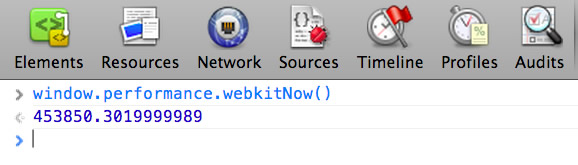

High Resolution Time (HRT) is a JavaScript interface that provides the current time with sub-millisecond resolution and isn't subject to system clock skew or user adjustments. Think of it as a way to measure time more precisely than we previously could with new Date and Date.now(). This is helpful when we're writing performance benchmarks.

HRT is currently available in Chrome (stable) as window.performance.webkitNow(), but the prefix is dropped in Chrome Canary, making it available via window.performance.now(). Paul Irish has written more about HRT in a post on HTML5Rocks.
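As a minimal sketch of using HRT for a quick benchmark, the code below prefers the unprefixed `performance.now()`, falls back to the prefixed `webkitNow()` mentioned above, and finally to `Date.now()`. It assumes a browser or Node.js 16+ (where `performance` is a global); the `timeIt` helper is a hypothetical name, not part of any API.

```javascript
// Pick the best available clock: unprefixed High Resolution Time,
// then the older prefixed Chrome name, then millisecond-precision Date.now().
const now =
  typeof performance !== 'undefined' && performance.now
    ? () => performance.now()
    : typeof performance !== 'undefined' && performance.webkitNow
      ? () => performance.webkitNow()
      : () => Date.now();

// Run fn `iterations` times and report the elapsed time in milliseconds.
// timeIt is a hypothetical helper for illustration only.
function timeIt(label, fn, iterations = 1000) {
  const start = now();
  for (let i = 0; i < iterations; i++) {
    fn();
  }
  const elapsed = now() - start; // may be fractional with HRT
  console.log(`${label}: ${elapsed.toFixed(3)} ms for ${iterations} runs`);
  return elapsed;
}

// Example: time building a small string with Array.prototype.join.
timeIt('join', () => ['a', 'b', 'c'].join(''));
```

A loop this simple can be distorted by JIT warm-up and dead-code elimination, so treat the result as a rough signal rather than a definitive measurement.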

So, we now know the current time, but what if we wanted an API for accurately measuring performance on the web?

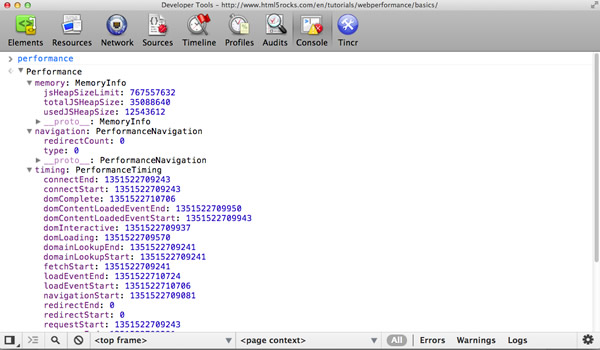

Well, one is available: the Navigation Timing API. This API provides a simple way to get accurate and detailed time measurements that are recorded while a webpage is loaded and presented to the user. Timing information is exposed via window.performance.timing, which you can inspect directly in the console.

Looking at the data above, we can extract some very useful information. For example, network latency is responseEnd - fetchStart, the time taken to load the page once it's been received from the server is loadEventEnd - responseEnd, and the time taken between navigation and page load is loadEventEnd - navigationStart.

As you can see above, a performance.memory property is also available that gives access to JavaScript memory usage data such as the total heap size.
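As a rough sketch, the helpers below compute the three durations described above from an object shaped like `window.performance.timing`, and read heap figures from `performance.memory`. The names `pageLoadMetrics` and `heapUsage` are hypothetical. `performance.memory` is non-standard and Chrome-only, so the code feature-detects it; the fields read (`usedJSHeapSize`, `totalJSHeapSize`, `jsHeapSizeLimit`) are the ones Chrome exposes.

```javascript
// Durations in milliseconds from a Navigation Timing object
// (every field is an epoch timestamp in ms).
function pageLoadMetrics(timing) {
  return {
    // Time spent fetching the document over the network.
    networkLatency: timing.responseEnd - timing.fetchStart,
    // Time to load the page once the response has been received.
    afterResponse: timing.loadEventEnd - timing.responseEnd,
    // Total time from navigation start to the end of the load event.
    navigationToLoad: timing.loadEventEnd - timing.navigationStart,
  };
}

// JavaScript heap usage in megabytes, or null when the non-standard
// performance.memory property is unavailable (i.e. outside Chrome).
function heapUsage(perf = globalThis.performance) {
  const mem = perf && perf.memory;
  if (!mem) return null;
  const MB = 1024 * 1024;
  return {
    usedMB: mem.usedJSHeapSize / MB,
    totalMB: mem.totalJSHeapSize / MB,
    limitMB: mem.jsHeapSizeLimit / MB,
  };
}
```

In the browser, call `pageLoadMetrics(window.performance.timing)` only after the load event has finished: until then `loadEventEnd` is 0, so the last two values come out negative.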

For more details on the Navigation Timing API, read Sam Dutton’s great article Measuring Page Load Speed With Navigation Timing.

about:memory and about:tracing

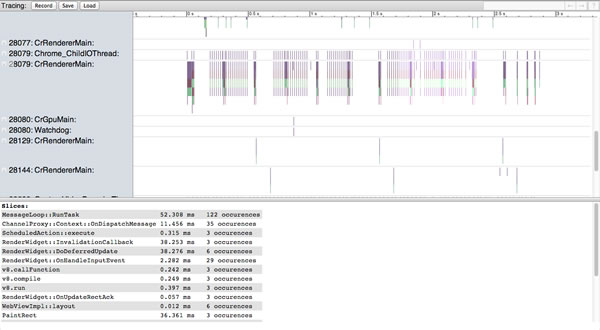

about:tracing in Chrome offers an intimate view of the browser’s performance, recording all of Chrome’s activities across every thread, tab and process.

What's really useful about this tool is that it allows you to capture profiling data about what Chrome is doing under the hood, so you can properly adjust your JavaScript execution or optimize your asset loading.

Lilli Thompson has an excellent write-up for games developers on using about:tracing to profile WebGL games. The write-up is also useful for general JavaScripters.

Navigating to about:memory in Chrome is also useful as it shows the exact amount of memory being used by each tab, which is helpful for tracking down potential leaks.

Conclusion

As we’ve seen, there are many hidden performance gotchas in the world of JavaScript engines, and no silver bullet available to improve performance. It’s only when you combine a number of optimizations in a (real-world) testing environment that you can realize the largest performance gains. But even then, understanding how engines interpret and optimize your code can give you insights to help tweak your applications.

Measure It. Understand it. Fix it. Rinse and repeat.

Remember to care about optimization, but stop short of micro-optimizing at the cost of convenience. For example, some developers choose .forEach and Object.keys over for and for...in loops, even though they're slower, for the convenience of getting a new function scope for each item. Do make sensible judgment calls about which optimizations your application absolutely needs and which ones it could live without.
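To make the scoping point concrete, here is a small sketch (the `scores` object is made up for illustration). With `var`, every closure created in a `for...in` loop shares a single variable, whereas `Object.keys(...).forEach` gives each callback its own scope. Note that since ES2015, declaring the loop variable with `let` also creates a fresh binding per iteration, which removes much of this particular motivation.

```javascript
// Hypothetical data for illustration.
const scores = { alice: 3, bob: 5 };

// for...in with `var`: all closures share one variable, so after the
// loop they all see its final value. for...in also visits inherited
// enumerable properties, hence the hasOwnProperty guard.
const viaForIn = [];
for (var keyA in scores) {
  if (Object.prototype.hasOwnProperty.call(scores, keyA)) {
    viaForIn.push(() => keyA);
  }
}

// Object.keys + forEach: one extra function call per item, but each
// callback has its own `key` parameter, so each closure keeps its value.
const viaForEach = [];
Object.keys(scores).forEach((key) => {
  viaForEach.push(() => key);
});

console.log(viaForIn.map((f) => f()));   // values: 'bob', 'bob'
console.log(viaForEach.map((f) => f())); // values: 'alice', 'bob'
```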

Also, be aware that although JavaScript engines continue to get faster, the next real bottleneck is the DOM. Reflows and repaints are just as important to minimize, so remember to touch the DOM only when it's absolutely required. And do care about networking: HTTP requests are precious, especially on mobile, and you should be using HTTP caching so that assets aren't downloaded again unnecessarily.

Keeping all of these in mind will ensure that you get the most out of the information from this post. I hope you found it helpful!

Credits

This article was reviewed by Jakob Kummerow, Michael Starzinger, Sindre Sorhus, Mathias Bynens, John-David Dalton and Paul Irish.
